Live stream rewind

2009-05-07


To take a break from my personal hacking on CD rippers and jukeboxes, I wanted to hack a little on a Flumotion feature.

Now, the feature in question was thought up years ago, just like many of our features. Our problem has never been 'what should we implement'; it's always been 'in what order do we implement all the things we could do?'

Anyway, this particular feature is the idea of being able to request a live stream, but going back in time. So instead of seeing what is on now, you could ask to see what was on 30 minutes ago. And you'd connect, get data from that point back in time, then continue as if it was a live stream.

Of course, I think all my ideas are great, without question. And as I get older, I've learned to accept that not everyone else realizes this, and so they don't pick up on these great ideas I'm having. (Who was it again that said irony doesn't work on the internet?)

Yesterday, in a meeting with my boss, I was asked to come up with reasons why we stream over HTTP and not over other protocols. I casually threw in a 'you know, we could do crazy things like rewinding in a live stream, or showing what was on half an hour ago.' That drew a 'hm, that's interesting' from my boss, instead of the usual grunt while he's typing away on his MacBook and fielding a call on his iPhone.

Today, our product manager mailed me to say he had heard about this idea from our boss, and that it would be a killer feature to have. So I dutifully answered some questions he had.

Now, these days, our development process is a bit more structured, so I have two ways of seeing if this can actually work. I can either create a ticket, draft up some requirements, get it on a roadmap for a development cycle, and work with a developer on the team to explain and help out and maybe have something in a few months.

Or, I could just prototype it myself to see if it works, and go the Far West way!

Coming back from tango class tonight, I had been thinking about how I would actually implement it, so I started hacking it in as a proof of concept. Basically, I wanted to extend the burst buffer of multifdsink (the GStreamer element responsible for doing the actual streaming) to, say, an hour, and parse a GET parameter like 'offset' in the HTTP request to start bursting from that time offset in the stream.
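For illustration, the request-side half of that plan (reading an 'offset' GET parameter) might look like the following minimal Python sketch. It assumes the offset is given in whole seconds and that the buffer holds one hour, as suggested above; the function name `requested_offset` is hypothetical, and this is not the actual Flumotion code.

```python
from urllib.parse import urlsplit, parse_qs

# Assumption: the burst buffer holds one hour of data, as suggested in the post.
BUFFER_SECONDS = 3600


def requested_offset(path: str, max_offset: int = BUFFER_SECONDS) -> int:
    """Return the rewind offset in seconds from a GET path like '/stream.ogg?offset=60'.

    Missing or malformed values fall back to 0 (a plain live stream), and
    values are clamped to what the buffer can actually serve.
    """
    query = parse_qs(urlsplit(path).query)
    values = query.get("offset")
    if not values:
        return 0
    try:
        offset = int(values[0])
    except ValueError:
        return 0
    # Never rewind further than the buffer reaches, and never into the future.
    return max(0, min(offset, max_offset))
```

Clamping means a request for more history than the buffer holds does not fail; that client simply gets the oldest data available.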

An embarrassing multifdsink bug fix and some flumotion mutilating later, I have this to show for my evening:

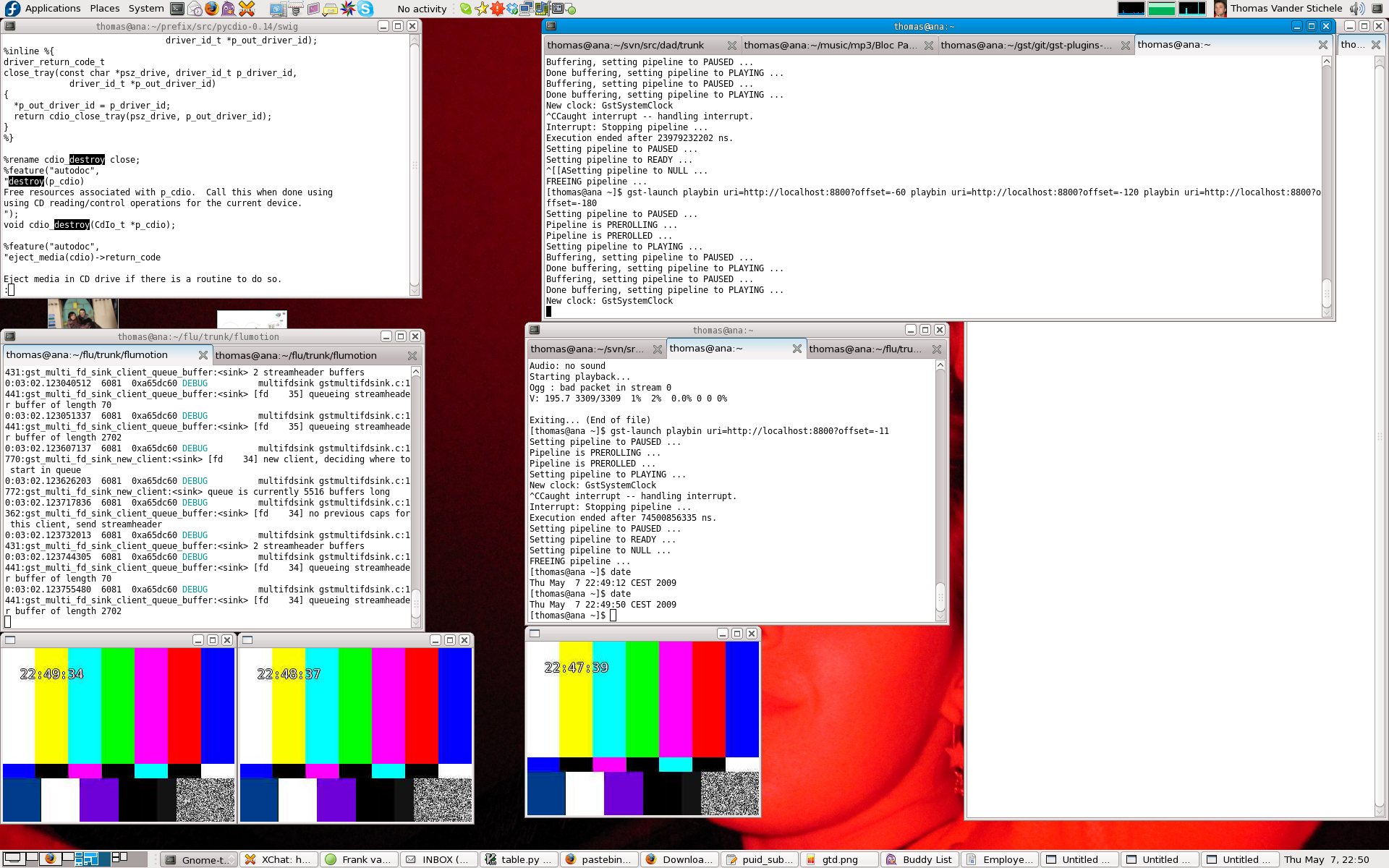

While it doesn't look that exciting, the screenshot shows a flumotion-launch pipeline, and a gst-launch pipeline with three playbins playing the same live stream, each with a different offset (one minute apart).

The three playback windows thus show three streams, roughly a minute apart from each other. The time displayed is the system time at which each frame was generated. The slight difference from the requested offset is because each stream starts at a keyframe.
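The keyframe effect can be illustrated with a small model: the server has to start the burst at the last keyframe at or before the requested point, so the client receives slightly more history than it asked for. This is a hypothetical Python sketch with an assumed buffer layout of `(timestamp, is_keyframe)` pairs; it is not multifdsink's actual implementation, which lives in C inside GStreamer.

```python
import bisect
from typing import List, Tuple

# Each buffered entry is (timestamp_in_seconds, is_keyframe), oldest first.
# This layout is a hypothetical stand-in for multifdsink's real buffer list.
Entry = Tuple[float, bool]


def burst_start_index(buffer: List[Entry], now: float, offset: float) -> int:
    """Index of the last keyframe at or before (now - offset).

    If no keyframe precedes the target, start at the oldest buffered keyframe;
    return -1 if the buffer holds no keyframe at all.
    """
    target = now - offset
    timestamps = [ts for ts, _ in buffer]
    # Position of the last entry whose timestamp is <= target (-1 if none).
    i = bisect.bisect_right(timestamps, target) - 1
    # Walk back to the nearest keyframe so the client can start decoding.
    while i >= 0 and not buffer[i][1]:
        i -= 1
    if i >= 0:
        return i
    # No keyframe at or before the target: use the oldest buffered keyframe.
    for j, (_, is_key) in enumerate(buffer):
        if is_key:
            return j
    return -1
```

With one entry per second and a keyframe every 10 seconds, asking for 25 seconds of rewind at t=60 starts the burst at t=30, five seconds earlier than requested.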

Not bad for a night of hacking.

Now, to actually get this nicely integrated, productized, supportable, and deployed on our platform is another matter. But now I can package up the ugly bits of hacks I did and hand it off to the team for analysis.

Maybe this is what our development manager meant when he said I should go back to hacking Flumotion once in a while?


Pretty neat! But doesn’t at all answer the question about “why HTTP?” – this is (conceptually) just as easy with other protocols. It’s entirely a matter of the server buffering and sending older data, after all…

You guys should get a stable release out! If it has neat functionality like this, even better! It’s been OVER TWO YEARS since the last release of a stable branch!

Comment by Mike Smith — 2009-05-07 @ 23:32

@Mike: why yes of course it’s not an advantage of HTTP as such. It’s only an advantage in the sense that we can do it, and we happen to have it on HTTP, and others can’t yet. So it’s going to last as an advantage until some other server implements it…

Don’t get me started on releasing :) Last time I asked, they said ‘we should skip 0.6 and go straight to 0.7’. Ask me where I heard that before.

Comment by Thomas — 2009-05-08 @ 05:28

Interesting hack!

I am very much in favor of releasing a stable version, because of course it means we'll all be treated to a nice restaurant!

Comment by Zaheer Merali — 2009-05-08 @ 09:22

If you’re gonna do it with HTTP, GET it with this:

http://blog.gingertech.net/2009/05/03/first-draft-of-a-new-media-fragment-uri-addressing-standard/

Comment by John Drinkwater — 2009-05-08 @ 18:53

I would _love_ to have this for our live air mozilla streams. Go, go, go!

Comment by Christopher Blizzard — 2009-05-08 @ 22:24

Neat hack!

Regarding releasing: why stop at 0.7 if you can go all the way to 7.0? :)

Comment by wingo — 2009-05-09 @ 11:59

After some private discussions with Thomas and John, and within the media fragments working group, I can now share that we have included a media fragment addressing scheme for wall-clock times. You could thus jump back by an hour by grabbing the current streaming time (e.g. 2009-08-26T12:34:04Z), subtracting an hour (giving 2009-08-26T11:34:04Z), and passing it in a media fragment URI: http://example.org/video.ogv#t=clock:2009-08-26T11:34:04Z. The draft specification is here: http://www.w3.org/2008/WebVideo/Fragments/WD-media-fragments-spec/#clock-time. Now if only there were an implementation… :-)

Comment by Silvia Pfeiffer — 2009-08-26 @ 03:38
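The arithmetic in the comment above is simple enough to sketch in Python. The helper name `rewind_fragment_uri` is hypothetical; the sketch assumes a timezone-aware current time and follows the `t=clock:` syntax from the draft quoted above.

```python
from datetime import datetime, timedelta, timezone


def rewind_fragment_uri(base: str, now: datetime, rewind: timedelta) -> str:
    """Append a wall-clock media fragment (#t=clock:...) pointing `rewind` into the past.

    Assumes `now` is timezone-aware; it is converted to UTC before formatting.
    """
    start = (now - rewind).astimezone(timezone.utc)
    # ISO 8601 UTC timestamp with a trailing 'Z', matching the draft's example.
    return f"{base}#t=clock:{start.strftime('%Y-%m-%dT%H:%M:%SZ')}"
```

Called with the example values from the comment (12:34:04Z minus one hour), it produces the same URI as given there.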

[…] A few months back, Thomas reported on a cool flumotion experiment that he hacked together which allows jumping back in time on a live video stream. […]

Pingback by ginger’s thoughts » Media Fragment addressing into a live stream — 2009-08-26 @ 03:58